What is MLOps going to look like in 2023?

Andreea Munteanu

on 23 January 2023

While AI seems to be the topic of the moment, especially in the tech industry, the need to deliver it reliably is becoming more obvious. MLOps, as a practice, needs to keep growing and stay relevant in view of the latest trends. Solutions like ChatGPT and MidJourney dominated internet chatter last year, but the main question is: what do we foresee in the MLOps space this year, and where is the community of MLOps practitioners focusing its energy? Let’s first look back at 2022 and then explore expectations for 2023.

Rewind of MLOps in 2022

2022 was the year of AI. It went from a tool used mainly for experimentation to a tool that promises successful outcomes. The Global AI Adoption Index reported an increasing percentage of enterprises that are likely to try AI, as well as more leaders that have already deployed it, especially in China, India, UAE and Italy. Yet, the challenges are far from solved, as 25% of companies still report a skill shortage or a price that is too high.

Everyone can try AI/ML these days…

ChatGPT took a victory lap in December 2022, when almost everyone was talking about it. It is an advanced chatbot, introduced by OpenAI, that can answer complex questions in a conversational manner. It is trained to learn what people mean from what they ask. This means it can give human-quality responses, which raises questions about how disruptive it can actually be. You should try it yourself.

Although it didn’t make headlines quite like ChatGPT, MidJourney is a research lab whose tool generates visuals from a description that the user provides, almost in real time. Their vision is to expand the world’s imaginative powers and their main focus is on design, human infrastructure and AI. The image below shows you what it generated based on this description:

funny, landscape, realistic, 4k, cinematic lighting, 4k post processing, futuristic, symmetrical, cinematic color grading, micro details, reddit, funny, make it less serious and funny

Kubeflow in 2022

Looking back, 2022 was a great year for MLOps. Canonical offers one of the official distributions of Kubeflow, so we naturally kept a close eye on the project. Kubeflow had two new releases, 1.5 and 1.6. The community also got back together and, towards the end of the year, Kubeflow Summit took place. With great sessions and working groups, use cases from companies such as Aurora, and challenges brought to the table, the event energised the community. The Kubeflow Survey 2022 also went out and shed light on the need for more documentation and tutorials, as well as improved installation and upgrades.

The year came with other big surprises for the project. It applied to CNCF in November last year and it’s aiming to become an incubating project.

Canonical MLOps in 2022

With Charmed Kubeflow, our official distribution, Canonical was closely involved in community initiatives last year. Our MLOps experts discussed the new releases and published a new tutorial and end-to-end guides. At Canonical, we strongly believe in growing the MLOps ecosystem, so during the year we made new integrations available, such as MindSpore.

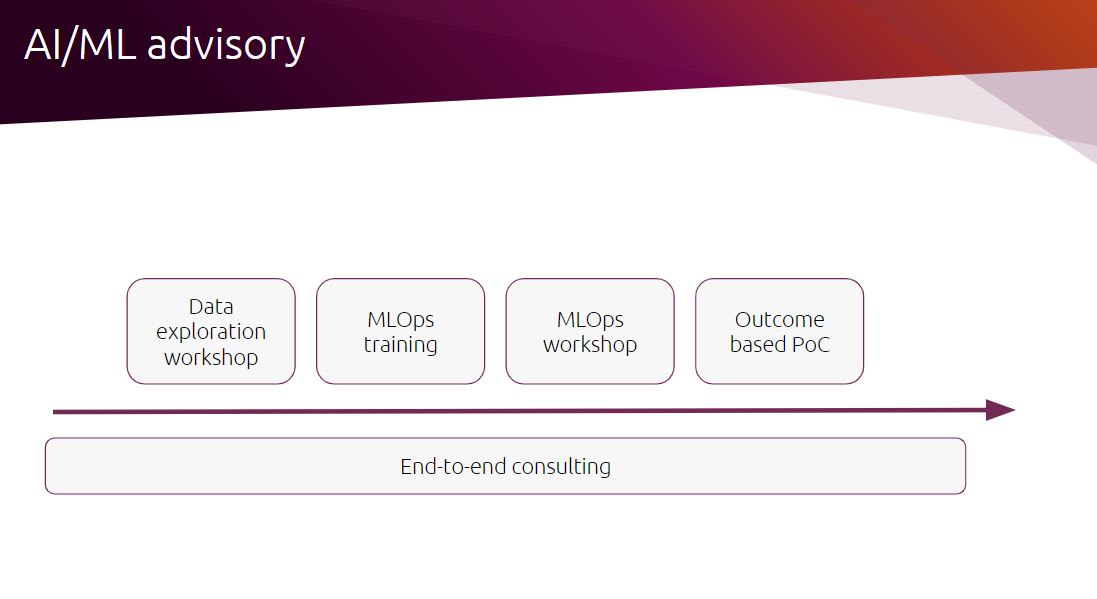

We also launched our new AI/ML advisory lane, which offers companies the chance to develop their MLOps skills and kickstart their AI initiatives, with Canonical’s support.

What’s in store for 2023?

With its rise in popularity, MLOps is clearly here to stay and will likely advance to address more complex use cases and compliance needs this year.

Data is at the core of any machine learning project and protecting it should be this year’s topic. Various communities have already raised the issue, and solutions are likely to follow. Protecting the pipelines that data runs through, addressing CVEs in a timely manner, offering safe data management, and monitoring for model or data drift are just some of the topics that the industry is very likely to talk about.
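To make the drift monitoring point more concrete, here is a minimal sketch of one common way to flag data drift: the Population Stability Index (PSI), which compares how a feature is distributed in training data versus live data. This is an illustration only, not something taken from Kubeflow or Charmed Kubeflow. The function name, the bin count and the synthetic samples are all assumptions made for the example.

```python
import math
import random


def population_stability_index(reference, current, bins=10):
    """PSI between two numeric samples, using equal-width bins from the reference.

    Hypothetical helper written for this post; not part of any MLOps library.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into edge bins
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(sample), 1e-6) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


# Synthetic data (assumption): one feature at training time and two live samples.
rng = random.Random(0)
training = [rng.gauss(0.0, 1.0) for _ in range(5000)]
same = [rng.gauss(0.0, 1.0) for _ in range(5000)]     # same distribution
shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]  # mean has drifted

psi_same = population_stability_index(training, same)
psi_shifted = population_stability_index(training, shifted)
print(f"PSI (no drift): {psi_same:.3f}")
print(f"PSI (drifted):  {psi_shifted:.3f}")
```

A commonly cited rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but any threshold should be tuned to the feature and the use case. In practice a check like this would run on a schedule against production data and raise an alert, rather than print to the console.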

On the other hand, in 2023, MLOps projects should focus on adoption and how to grow it. With documentation that is often incomplete, tutorials that are not handy and installation processes that take much longer than expected, there is a lot to be done, but one step at a time is enough.

For example, the Kubeflow community has already started an onboarding process for new joiners, which aims to help them get up to speed much faster, so that they know where, how and when they can contribute. While this seems small, it answers a big question that the community raised last year during the summit: “How do I get started?” Initiatives like this, as well as a greater focus on collateral, will be welcome, so that early adopters can finally get used to MLOps.

The first step is gathering feedback! Fill out our form below:

Your responses will be used for an industry report that will contribute to advancing the community’s knowledge. But don’t worry: your info will be kept anonymous. You can also book a meeting with Charmed Kubeflow’s Product Manager. This is your chance to learn about our roadmap, ask questions and provide your feedback directly.

It’s a wrap…or just a beginning for MLOps in 2023

With 12 months ahead of us, MLOps has plenty of time to surprise everyone. It is, at the end of the day, a collaborative function that comprises data scientists, DevOps engineers and IT. As the market evolves, new roles will be added to the list. However, everyone should stay focused on the same ongoing goals: improving code quality, increasing the pace of model development and automating tasks.

New solutions will likely appear on the market, similar to the ones that we mentioned above. Enterprises will probably have higher expectations of machine learning projects, and thus of the tooling behind them. Cost efficiency and time-effectiveness will become more and more important discussions, influencing business decisions related to MLOps.

Learn more about MLOps

- [Webinar] Retail at the edge with MLOps: market basket analysis

- [Whitepaper] Getting started with AI

- [Whitepaper] A guide to MLOps

- [Blog] Kubeflow pipelines: part 1 & part 2

- What is Kubeflow
