What’s the deal with edge computing?

Adi Singh

on 1 June 2020

Tags: 5G, Edge Computing, EdgeX, Internet of things, IoT

With over 41 billion IoT devices expected to be active by 2027 — that’s at least 5 devices for every person on the planet — edge computing has emerged as a tenable solution to prevent the impending snowballing of network traffic.

Allow me to lift the veil on this buzzword and explain why it’s been gaining attention in tech circles lately.

The concept

IoT devices generate a lot of data. Smart home hubs constantly accrue information on voice commands, ambient noise, and auxiliary device output. Connected security cameras transfer several gigabytes of image data each day. And self-driving cars will, in all likelihood, be gathering hundreds of terabytes of data each year.

The idea of edge computing is to process all this data at the location it is collected. Data that is only of ephemeral importance can (and should) be crunched on the device itself. This is in contrast to cloud computing, where data is sent to massive, far-away compute warehouses for processing.
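As a rough illustration of crunching ephemeral data on the device, the sketch below reduces a window of raw sensor readings to a small summary, so that only the summary would need to leave the device. The function name, the field names and the sample readings are all hypothetical, chosen for this example only.

```python
from statistics import mean

def summarize_on_device(readings, alert_threshold):
    """Reduce a window of raw readings to a small summary before upload."""
    return {
        "count": len(readings),
        "mean": mean(readings),
        "max": max(readings),
        # Only unusual readings are kept individually.
        "alerts": [r for r in readings if r > alert_threshold],
    }

# Hypothetical window of temperature samples collected on the device.
window = [21.0, 21.5, 22.0, 35.0, 21.5]
summary = summarize_on_device(window, alert_threshold=30.0)
print(summary)
```

In a real deployment the window would hold thousands of samples, so the saving in transferred data grows with the sampling rate while the summary stays the same size.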

Despite steady innovation in communication technology, traditional cloud computing is struggling to deliver acceptable response times for devices operating at the fringes of a network. The rapid increase in IoT device adoption also threatens to choke the bandwidth of existing network infrastructure.

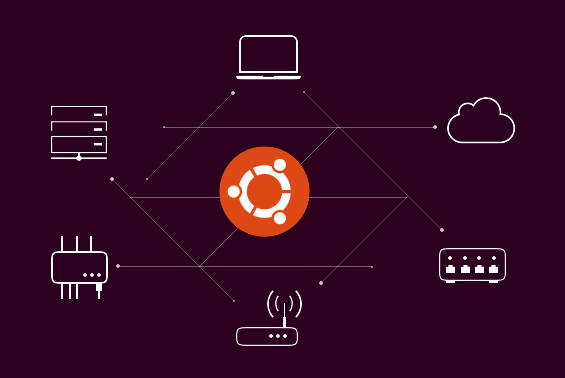

Edge computing tries to exploit chipsets at or close to the data source (like those in smart appliances, mobile phones and network gateways) for performing computational tasks instead of aggregating all that work in a central cloud. This physical proximity to the endpoint is good for both latency and efficiency, and it saves networks from unnecessary congestion.

The benefits

So why all the hype? There are three key reasons why edge computing has become so popular in tech circles.

Furiously fast

The biggest advantage of an edge computing architecture is speed. For all the advancements in network engineering, physical distance remains a key determinant in how quickly a server can process a user request. Waiting for data packets to travel to the cloud server, sit in a queue for crunching, and then travel back to the device with the right response simply takes too long.
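To put a rough number on the distance argument, here is a back-of-the-envelope sketch of the minimum round-trip propagation delay over optical fibre. It assumes light travels through fibre at roughly 200,000 km/s (about two thirds of its speed in a vacuum) and ignores queuing, routing and processing time, so real delays are higher. The distances and labels are illustrative assumptions.

```python
# Approximate speed of light in optical fibre, about two thirds of c.
FIBER_SPEED_KM_PER_S = 200_000

def round_trip_ms(distance_km):
    """Minimum round-trip propagation delay over fibre, in milliseconds."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000

# Hypothetical distances between a device and where its data is processed.
scenarios = [
    ("on-site gateway", 1),
    ("regional data centre", 500),
    ("distant cloud region", 5000),
]
for label, km in scenarios:
    print(f"{label}: {round_trip_ms(km):.2f} ms")
```

Even before any server-side work, a request to a data centre 5,000 km away spends about 50 ms in transit, a floor that no amount of network engineering can remove.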

Several IoT use cases cannot afford such delays. Fast-moving manufacturing robots, heavy mining machinery, smart grids managing traffic, and self-driving cars depend on virtually immediate responses to raw data to function safely and effectively. Leveraging device resources for data processing removes network latency from the equation and thus enables critical real-time applications, even in areas with spotty internet availability.

At other times, the effects of speed are less critical to safety but are important for businesses anyway. Processing network requests even a couple of seconds faster demonstrably improves end-user experience, and gives products a competitive advantage.

Google processes image data from its Pixel smartphones directly on the device, allowing for snappier and more seamless camera interfaces. And AWS offers a globally distributed content delivery network that caches media files at servers located closest to consumers, thus improving application loading speed.

Patently private

Another benefit of keeping data at the edge is privacy. Many users get understandably uncomfortable sending their personal data to remote data stores which they cannot see or control. And transferring user data over (even secure) networks makes it vulnerable to theft and distortion.

These concerns can often be addressed by guaranteeing that a user’s personal data never leaves the local device. Most famously, Apple deflected much of the criticism of its security policy by storing and authenticating a user’s biometric information entirely on their edge device.

Conveniently cheap

And finally, there is a cost advantage to the edge paradigm. Moving processing resources to the network periphery is one of the few practical, cost-effective ways to handle the deluge of data springing from the predicted exponential increase in IoT usage.

In accordance with Moore’s Law, small devices at the edge have become more computationally powerful. At the same time, costs associated with transferring and storing huge amounts of data have remained pretty much constant. If the trend continues, switching to an edge infrastructure will inevitably be a much cheaper solution for businesses in the long run than current cloud architectures.

Chipmakers are innovating fiercely in this field, releasing high-end GPU architectures that can handle increasingly complex operations at the edge. Just last year, Nvidia and Qualcomm respectively launched EGX and Vision Intelligence Platforms for enabling low-latency AI at the edge.

The 5G factor

The pressing need for 5G in high growth areas like fully self-driving cars, real-time virtual reality experiences, and high-end multiplayer online gaming is further fueling innovation around edge computing. 5G promises to significantly improve application performance across the board by offering 10x faster data rates than existing 4G networks.

Edge computing remains a fundamental enabler of 5G, and is one of the rare mechanisms that can viably meet the speed, scale and safety requirements of 5G standards. Processing data streams at the edge can drop buffering times to virtually zero. Keeping customer data on the devices boosts security by reducing avenues for remote hacking. And the cost characteristics of edge computing allow companies to make the most of the extra connections that 5G networks can handle without a matching rise in spending.

Strategically leveraging edge services can thus help network providers obtain a first-mover advantage in the disruptive 5G arena.

The framework

Distributed edge infrastructures can quickly get out of hand. One needs a set of tools to easily deploy, manage and optimise network architectures with as little effort as possible. An open-source framework like EdgeX Foundry running on Ubuntu is one of the quickest ways to start with edge computing.

Frameworks are a great way to begin exploring a new technical concept. Launched in April 2017, EdgeX provides a platform for developers to build custom IoT solutions. The framework is essentially a collection of core microservices that provide a set of standard APIs which allow developers to easily build their applications, leveraging capabilities for local analytics, data filtering, transformation and export to the cloud.
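To make the filter, transform and export stages concrete, here is a minimal sketch of such a pipeline in plain Python. It does not use the EdgeX APIs; every function name, device name and event field below is hypothetical, and the export step only serialises to JSON rather than sending anything to a cloud endpoint.

```python
import json

def filter_by_device(events, allowed_devices):
    """Keep only events coming from devices we care about."""
    return [e for e in events if e["device"] in allowed_devices]

def to_celsius(events):
    """Transform Fahrenheit readings into Celsius, rounded to one decimal."""
    return [
        {**e, "value": round((e["value"] - 32) * 5 / 9, 1), "unit": "C"}
        for e in events
    ]

def export(events):
    """Stand-in for a cloud export step: serialise to JSON instead of sending."""
    return json.dumps(events)

# Hypothetical events as they might arrive from local devices.
events = [
    {"device": "thermostat-1", "value": 68.0, "unit": "F"},
    {"device": "doorbell-7", "value": 50.0, "unit": "F"},
    {"device": "thermostat-1", "value": 212.0, "unit": "F"},
]
payload = export(to_celsius(filter_by_device(events, {"thermostat-1"})))
print(payload)
```

Keeping each stage as a small, independent function mirrors the microservice idea: a stage can be swapped or reordered without touching the others.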

EdgeX released its latest version, nicknamed Geneva (v1.2), earlier this month, which boasts more robust security, optimised analytics, and scalable connectivity across multiple devices.

The outro

It is a given at this point that IoT depends on well-performing edge architectures to realise its full potential. But like many buzzwords, edge computing repackages a mature technical concept into a fashionable soundbite. Before the hype around cloud computing, most data processing did take place at the data source. That is, at what we now call the edge.

So in a way, we are returning to a bygone paradigm to address the present limitations of the cloud. But this time, armed with novel tools like embedded OSs, edge gateways and single-node clusters, we are better prepared to tackle the problem of scalable computing.
