Deploying Kubernetes Locally – MicroK8s

anaqvi

on 25 September 2019

Tags: Deployment , kubectl , kubernetes , MicroK8s

This is the second part of our introduction to MicroK8s. In the previous blog post, we introduced MicroK8s, went over some basic K8s concepts and showed you how fast and easy it is to install Kubernetes with MicroK8s: it’s up in under 60 seconds with a one-liner command. In this post, we take a closer look at the add-ons available in MicroK8s and show you how to deploy pods in MicroK8s.

MicroK8s Add-ons

MicroK8s comes packed with the carefully selected add-ons listed below to boost your Kubernetes productivity. These bundled add-ons work out of the box with MicroK8s, saving you valuable developer time and reducing deployment complexity. K8s (Kubernetes) components that would take hours at best to configure in your own deployment are up and running in MicroK8s with a few one-liners.

- DNS: Deploy DNS. Other add-ons may depend on it, so we recommend always enabling it.

- Dashboard: Deploy the Kubernetes dashboard as well as Grafana and InfluxDB.

- Cilium: Use Cilium for enhanced networking features, including Kubernetes NetworkPolicy support for pod-to-pod connectivity management and service load balancing between pods.

- Helm: Manage, update, share and roll back Kubernetes applications.

- Storage: Create a default storage class. This storage class makes use of the hostpath-provisioner pointing to a directory on the host.

- Ingress: Create an ingress controller.

- GPU: Expose GPU(s) to MicroK8s by enabling the nvidia-docker runtime and nvidia-device-plugin-daemonset. Requires NVIDIA drivers to be already installed on the host system.

- Istio: Deploy the core Istio services. You can use the microk8s istioctl command to manage your deployments.

- Knative: Deploy Knative Serving, Eventing and monitoring on your MicroK8s cluster.

- Registry: Deploy a Docker private registry and expose it on localhost:32000. The storage add-on will be enabled as part of this.

All of these add-ons can be enabled or disabled using the microk8s enable and microk8s disable commands, respectively.
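The following is a minimal sketch of the enable/disable workflow as two shell helpers intended to be sourced into your shell. The function names `enable_addons` and `disable_addons` are hypothetical and are not part of MicroK8s. After enabling, the script waits with `microk8s status --wait-ready`. That command waits for MicroK8s itself to be ready. It does not wait for every add-on pod to reach the Running state.

```sh
#!/bin/sh
# Hypothetical helpers (not part of MicroK8s) wrapping microk8s enable/disable.

# Enable one or more add-ons in a single call, then wait until
# MicroK8s reports that it is ready.
enable_addons() {
  if [ "$#" -eq 0 ]; then
    echo "usage: enable_addons <add-on> [<add-on> ...]" >&2
    return 1
  fi
  microk8s enable "$@" && microk8s status --wait-ready
}

# Disable one or more add-ons.
disable_addons() {
  if [ "$#" -eq 0 ]; then
    echo "usage: disable_addons <add-on> [<add-on> ...]" >&2
    return 1
  fi
  microk8s disable "$@"
}
```

For example, `enable_addons dns dashboard` runs the same `microk8s enable dns dashboard` command used in the walkthrough that follows, and then waits for MicroK8s.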

MicroK8s commands

- microk8s status: Provides an overview of the MicroK8s state (running / not running) as well as the set of enabled add-ons

- microk8s enable <add-on name>: Enables an add-on

- microk8s disable <add-on name>: Disables an add-on

- microk8s kubectl: Interact with Kubernetes

- microk8s config: Shows the Kubernetes config file

- microk8s istioctl: Interact with the Istio services; needs the Istio add-on to be enabled

- microk8s inspect: Performs a quick inspection of the MicroK8s installation

- microk8s reset: Resets the infrastructure to a clean state

- microk8s stop: Stops all Kubernetes services

- microk8s start: Starts MicroK8s after it has been stopped
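Several of these commands combine naturally into small helpers. The sketch below uses only `microk8s stop`, `start`, `status --wait-ready` and `config`. It assumes a POSIX shell, and the function names `restart_microk8s` and `export_kubeconfig` are hypothetical.

```sh
#!/bin/sh
# Hypothetical helpers (not part of MicroK8s) built from the commands above.

# Stop all Kubernetes services, start them again and wait until
# MicroK8s reports that it is ready.
restart_microk8s() {
  microk8s stop && microk8s start && microk8s status --wait-ready
}

# Write the output of `microk8s config` to the given file, e.g. for use
# with a separately installed kubectl.
export_kubeconfig() {
  if [ "$#" -ne 1 ]; then
    echo "usage: export_kubeconfig <file>" >&2
    return 1
  fi
  # The config holds cluster credentials, so a newly created file is
  # made readable by its owner only.
  ( umask 077 && microk8s config > "$1" )
}
```

Running `export_kubeconfig` inside a subshell keeps the `umask` change local, so the caller's shell settings are not affected.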

MicroK8s in action

Let’s dive deeper into MicroK8s usage. We’ll go over accessing the dashboards in MicroK8s, deploying K8s pods and managing and accessing the cluster through the dashboard.

Note: You need admin access to use MicroK8s.

First, let’s go through accessing the dashboard. Enable DNS and dashboard add-ons using:

microk8s enable dns dashboard

You can check the status of MicroK8s and its add-ons by running:

microk8s status

Check the deployment progress of our add-ons with:

microk8s kubectl get all --all-namespaces

It only takes a few minutes for all pods to reach the “Running” state, and you should see output similar to the one below.

From this output, we can see that the kubernetes-dashboard service in the kube-system namespace has a ClusterIP of 10.152.183.64 and listens on TCP port 443. The ClusterIP is randomly assigned, so if you follow these steps on your host, your IP address might differ; make sure you replace the IP address in these instructions with your own cluster IP. Visit https://10.152.183.64:443 and you should see the Kubernetes dashboard. To log in to the dashboard, use the default token retrieved by running the following commands:

token=$(microk8s kubectl -n kube-system get secret | grep default-token | cut -d " " -f1)
microk8s kubectl -n kube-system describe secret "$token"

You can also use the command below to see the API server proxies for the dashboards.

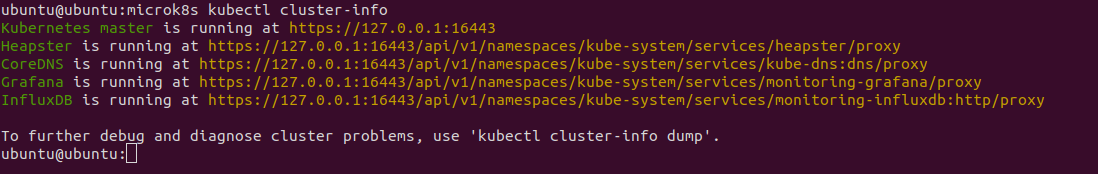

microk8s kubectl cluster-info

You should see output similar to the one below.

We can visit these proxies to access the corresponding dashboards. To open the Grafana dashboard, visit https://127.0.0.1:16443/api/v1/namespaces/kube-system/services/monitoring-grafana/proxy in your browser. Fetch the username and password using the command:

microk8s config

Deploy a service on Kubernetes

There won’t be much activity on the dashboard since we haven’t deployed anything yet. Let’s change that by deploying our first K8s service using MicroK8s.

We will create a microbot deployment with two pods via the kubectl CLI. First, create the deployment with the following command:

microk8s kubectl create deployment microbot --image=dontrebootme/microbot:v1

ARM users need to use the following command instead:

microk8s kubectl create deployment microbot --image=cdkbot/microbot-arm64:latest

Scale the deployment to two replicas:

microk8s kubectl scale deployment microbot --replicas=2

Create a service to expose the deployment:

microk8s kubectl expose deployment microbot --type=NodePort --port=80 --name=microbot-service

Our service should now be running in the cluster. To check, run:

microk8s kubectl get all --all-namespaces

Our pods and service are now running. We can access the service through its cluster IP or, since the service is of type NodePort, through a port on the host machine. The port is randomly selected; in our case it is 31553, so we can visit http://localhost:31553 in our browser to access our deployment.
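The deployment steps above can be collected into one script. This is a sketch that assumes `uname -m` reports `aarch64` or `arm64` on the ARM hosts the `cdkbot/microbot-arm64` image targets. The helper names are hypothetical. Rather than reading the randomly selected NodePort off the `get all` output, `microbot_url` queries it with a kubectl JSONPath expression.

```sh
#!/bin/sh
# Hypothetical helpers (not part of MicroK8s) scripting the microbot
# deployment from this post.

# Choose the ARM image on 64-bit ARM hosts, otherwise the default image.
microbot_image() {
  case "$(uname -m)" in
    aarch64|arm64) echo "cdkbot/microbot-arm64:latest" ;;
    *) echo "dontrebootme/microbot:v1" ;;
  esac
}

# Create the deployment, scale it to two replicas and expose it as a NodePort service.
deploy_microbot() {
  microk8s kubectl create deployment microbot --image="$(microbot_image)" &&
    microk8s kubectl scale deployment microbot --replicas=2 &&
    microk8s kubectl expose deployment microbot --type=NodePort \
      --port=80 --name=microbot-service
}

# Print the host URL of the service, using its assigned NodePort.
microbot_url() {
  microbot_port=$(microk8s kubectl get service microbot-service \
    -o jsonpath='{.spec.ports[0].nodePort}') || return 1
  [ -n "$microbot_port" ] || return 1
  echo "http://localhost:${microbot_port}"
}
```

After sourcing the file, `deploy_microbot && microbot_url` prints a URL like the one above. The port in that URL will probably differ on your host.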

And there we have it: our Kubernetes deployment is up and running! Take it for a spin, run some experiments, try to break stuff. And if you come across any bugs or glitches, or think of features you’d like to see in MicroK8s, hit us up on GitHub, the Kubernetes forum, Slack (#microk8s channel) or tag us @canonical, @ubuntu on Twitter (#MicroK8s).

